- ML - Home

- ML - Introduction

- ML - Getting Started

- ML - Basic Concepts

- ML - Ecosystem

- ML - Python Libraries

- ML - Applications

- ML - Life Cycle

- ML - Required Skills

- ML - Implementation

- ML - Challenges & Common Issues

- ML - Limitations

- ML - Reallife Examples

- ML - Data Structure

- ML - Mathematics

- ML - Artificial Intelligence

- ML - Neural Networks

- ML - Deep Learning

- ML - Getting Datasets

- ML - Categorical Data

- ML - Data Loading

- ML - Data Understanding

- ML - Data Preparation

- ML - Models

- ML - Supervised Learning

- ML - Unsupervised Learning

- ML - Semi-supervised Learning

- ML - Reinforcement Learning

- ML - Supervised vs. Unsupervised

- Machine Learning Data Visualization

- ML - Data Visualization

- ML - Histograms

- ML - Density Plots

- ML - Box and Whisker Plots

- ML - Correlation Matrix Plots

- ML - Scatter Matrix Plots

- Statistics for Machine Learning

- ML - Statistics

- ML - Mean, Median, Mode

- ML - Standard Deviation

- ML - Percentiles

- ML - Data Distribution

- ML - Skewness and Kurtosis

- ML - Bias and Variance

- ML - Hypothesis

- Regression Analysis In ML

- ML - Regression Analysis

- ML - Linear Regression

- ML - Simple Linear Regression

- ML - Multiple Linear Regression

- ML - Polynomial Regression

- Classification Algorithms In ML

- ML - Classification Algorithms

- ML - Logistic Regression

- ML - K-Nearest Neighbors (KNN)

- ML - Naïve Bayes Algorithm

- ML - Decision Tree Algorithm

- ML - Support Vector Machine

- ML - Random Forest

- ML - Confusion Matrix

- ML - Stochastic Gradient Descent

- Clustering Algorithms In ML

- ML - Clustering Algorithms

- ML - Centroid-Based Clustering

- ML - K-Means Clustering

- ML - K-Medoids Clustering

- ML - Mean-Shift Clustering

- ML - Hierarchical Clustering

- ML - Density-Based Clustering

- ML - DBSCAN Clustering

- ML - OPTICS Clustering

- ML - HDBSCAN Clustering

- ML - BIRCH Clustering

- ML - Affinity Propagation

- ML - Distribution-Based Clustering

- ML - Agglomerative Clustering

- Dimensionality Reduction In ML

- ML - Dimensionality Reduction

- ML - Feature Selection

- ML - Feature Extraction

- ML - Backward Elimination

- ML - Forward Feature Construction

- ML - High Correlation Filter

- ML - Low Variance Filter

- ML - Missing Values Ratio

- ML - Principal Component Analysis

- Reinforcement Learning

- ML - Reinforcement Learning Algorithms

- ML - Exploitation & Exploration

- ML - Q-Learning

- ML - REINFORCE Algorithm

- ML - SARSA Reinforcement Learning

- ML - Actor-critic Method

- ML - Monte Carlo Methods

- ML - Temporal Difference

- Deep Reinforcement Learning

- ML - Deep Reinforcement Learning

- ML - Deep Reinforcement Learning Algorithms

- ML - Deep Q-Networks

- ML - Deep Deterministic Policy Gradient

- ML - Trust Region Methods

- Quantum Machine Learning

- ML - Quantum Machine Learning

- ML - Quantum Machine Learning with Python

- Machine Learning Miscellaneous

- ML - Performance Metrics

- ML - Automatic Workflows

- ML - Boost Model Performance

- ML - Gradient Boosting

- ML - Bootstrap Aggregation (Bagging)

- ML - Cross Validation

- ML - AUC-ROC Curve

- ML - Grid Search

- ML - Data Scaling

- ML - Train and Test

- ML - Association Rules

- ML - Apriori Algorithm

- ML - Gaussian Discriminant Analysis

- ML - Cost Function

- ML - Bayes Theorem

- ML - Precision and Recall

- ML - Adversarial

- ML - Stacking

- ML - Epoch

- ML - Perceptron

- ML - Regularization

- ML - Overfitting

- ML - P-value

- ML - Entropy

- ML - MLOps

- ML - Data Leakage

- ML - Monetizing Machine Learning

- ML - Types of Data

- Machine Learning - Resources

- ML - Quick Guide

- ML - Cheatsheet

- ML - Interview Questions

- ML - Useful Resources

- ML - Discussion

Machine Learning - OPTICS Clustering

OPTICS is like DBSCAN (Density-Based Spatial Clustering of Applications with Noise), another popular density-based clustering algorithm. However, OPTICS has several advantages over DBSCAN, including the ability to identify clusters of varying densities, the ability to handle noise, and the ability to produce a hierarchical clustering structure.

Implementation of OPTICS in Python

To implement OPTICS clustering in Python, we can use the scikit-learn library. The scikit-learn library provides a class called OPTICS that implements the OPTICS algorithm.

Here's an example of how to use the OPTICS class in scikit-learn to cluster a dataset −

Example

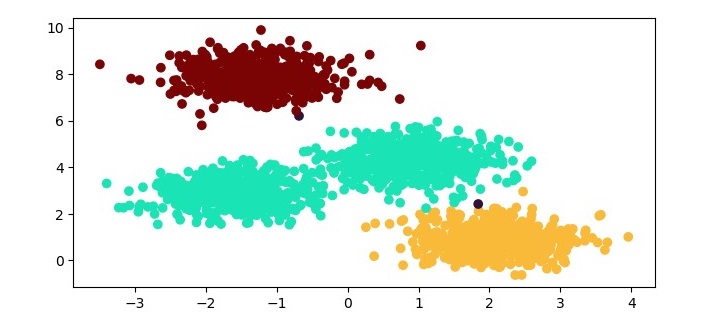

from sklearn.cluster import OPTICS from sklearn.datasets import make_blobs import matplotlib.pyplot as plt # Generate sample data X, y = make_blobs(n_samples=2000, centers=4, cluster_std=0.60, random_state=0) # Cluster the data using OPTICS optics = OPTICS(min_samples=50, xi=.05) optics.fit(X) # Plot the results labels = optics.labels_ plt.figure(figsize=(7.5, 3.5)) plt.scatter(X[:, 0], X[:, 1], c=labels, cmap='turbo') plt.show()

In this example, we first generate a sample dataset using the make_blobs function from scikit-learn. We then instantiate an OPTICS object with the min_samples parameter set to 50 and the xi parameter set to 0.05. The min_samples parameter specifies the minimum number of samples required for a cluster to be formed, and the xi parameter controls the steepness of the cluster hierarchy. We then fit the OPTICS object to the dataset using the fit method. Finally, we plot the results using a scatter plot, where each data point is colored according to its cluster label.

Output

When you execute this program, it will produce the following plot as the output −

Advantages of OPTICS Clustering

Following are the advantages of using OPTICS clustering −

Ability to handle clusters of varying densities − OPTICS can handle clusters that have varying densities, unlike some other clustering algorithms that require clusters to have uniform densities.

Ability to handle noise − OPTICS can identify noise data points that do not belong to any cluster, which is useful for removing outliers from the dataset.

Hierarchical clustering structure − OPTICS produces a hierarchical clustering structure that can be useful for analyzing the dataset at different levels of granularity.

Disadvantages of OPTICS Clustering

Following are some of the disadvantages of using OPTICS clustering.

Sensitivity to parameters − OPTICS requires careful tuning of its parameters, such as the min_samples and xi parameters, which can be challenging.

Computational complexity − OPTICS can be computationally expensive for large datasets, especially when using a high min_samples value.